“Without contraries is no progression.” William Blake

1. The Owl of Minerva

History, according to the idealist philosopher Friedrich Hegel, is not a disconnected jumble of accidents; rather, it is the purposeful and progressive ascent of the absolute spirit (Geist) to absolute knowledge. This ascent is achieved through a series of ideological clashes or contradictions. These contradictions are obstacles that discipline and propel thought (the spirit?) to a new stage, never allowing it to complacently settle. Without such contradictions, the spirit (thought?) would stagnate. Don’t ask me exactly what this really means, because I do not have the faintest clue, despite reading plenty of books with titles like “An idiot’s guide to Hegel,” or “Hegel for the metaphysically challenged.” However, grandiose (or obscurantist) metaphysics aside, Hegel’s argument that progress requires dynamic clashes between apparently contradictory ideas is appealing. In fact, I will try to convince you that Hegel’s philosophy is exactly correct, if, of course, we eliminate his rather preposterous metaphysics. Reason (a less metaphysical alternative to Hegel’s “Geist,” or “absolute spirit”) progresses only through opposition. Without opposition, reason becomes a swampy still water, a breeding ground for the poisonous flies of dogmatism and superstition. I will make this case by arguing that (1) cognitive processes come in two broad varieties (fast and slow: system 1 and system 2); (2) most of our conclusions are reached through system 1 processes (fast, not deliberative); (3) reasoning evolved to facilitate argumentation, not to reach truth; and (4) only strong opposition can reliably compel us to change mistaken or misguided beliefs.

2. How one arrives—fast and slow

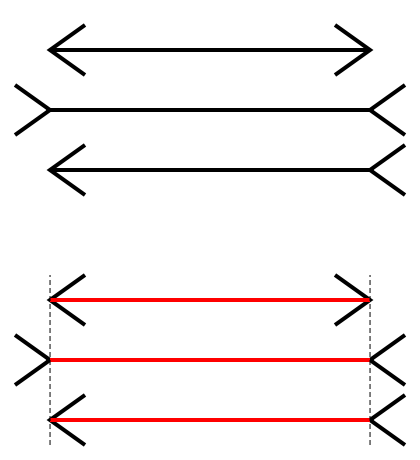

Most of us probably believe that the mind is like one of those spectacular grandfather clocks with a glass facade. We can look through it, as it were, and observe its internal machinery. We can know why we believe this or that simply by carefully introspecting about those beliefs. Psychologists and philosophers, however, have spent the last several centuries dismantling this intuitive image of the mind. Not only is the mind not transparent, but also it is not even unified. It is not a single substance or thing; rather, it is probably comprised of a number of specialized mechanisms designed to solve recurrent evolutionary problems. These seem to operate without conscious reflection and appear impervious to (conscious) cognitive input. For example, take the Müller-Lyer illusion, a well known perceptual illusion:

My guess is that you could tell yourself that these lines are the same length all day without altering your perception (they are the same length; check the red lines!). They don’t seem the same length; they never will. Your perceptual system is like an intractable child: it will not listen to the stern rebukes of your consciousness telling it “but these are the same, damn it!”

To make things simple, we can say that the mind is composed of one reasonably flexible system (roughly: consciousness or the executive system, but not quite) and a series of relatively inflexible systems. To make things simpler, we can call the flexible system system 2, and we can call the entire bundle of inflexible systems system 1. System 1 is quick, dirty, and relies on “rules of thumb” called heuristics. System 2 is relatively slow, deliberative, and creative. To illustrate, consider this problem:

What is the answer to the following question:

Linda is 31 years old, single, outspoken, and very bright. She majored in philosophy. As a student, she was deeply concerned with issues of discrimination and social justice, and also participated in anti-nuclear demonstrations.

Which is more probable?

- Linda is a bank teller.

- Linda is a bank teller and is active in the feminist movement.

If you are like most people, you probably answered that the second option is more likely. If so, think about the problem more carefully. It is actually logically impossible for option two to be more probable than option one, because option one is contained in option two, and option two adds more, not less, information about Linda. If Linda is a bank teller and is active in the feminist movement, she is still a bank teller, and option one is still correct. On the other hand, if Linda is a bank teller who is not active in the feminist movement, then option one is correct and option two is incorrect. At the extreme, these two options could be equally likely (although that is highly implausible). Option two can never be more probable.
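For readers who like to see the logic spelled out, the same point can be written in standard probability notation. This is only a sketch: T stands for “Linda is a bank teller” and F for “Linda is active in the feminist movement,” and both symbols are shorthand introduced here, not part of the original problem.

$$
P(T \wedge F) \;=\; P(T)\,P(F \mid T) \;\le\; P(T), \qquad \text{because } 0 \le P(F \mid T) \le 1 .
$$

The two sides are equal only if every bank teller fitting Linda's description is certain to be an active feminist, that is, only if P(F | T) = 1. With purely made-up numbers, say P(T) = 0.05 and P(F | T) = 0.9, option two comes out at 0.05 × 0.9 = 0.045, which is still below 0.05.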

So what gives? Why are we so likely to pick option two even though it can never be the more probable answer? According to Amos Tversky and Daniel Kahneman, who originally put forward this example, we are prone to getting the question wrong because system 1 relies upon certain cognitive shortcuts called heuristics (Tversky & Kahneman, 1983). In this case, system 1 relies upon the representativeness heuristic. The description of Linda seems more representative of a feminist bank teller than of just a bank teller; therefore, system 1 spits out that answer. Kahneman and Tversky (see Kahneman, 2011) have documented many of the heuristics that system 1 relies upon, but they are not important here. What is important is that the brain might be composed of two (probably more, as noted) minds. One mind (system 1) is fast and uses shortcuts; the other (system 2) is more plodding but is capable of creative feats that escape the talents of the first.

System 1 cognition results in gut intuitions, intuitions that are difficult to shake. For example, I know the answer to the Linda problem from above; I have known it for a long time. And yet, some part of me feels very strongly that option two must be correct. Evidence suggests that these intuitions guide most of our beliefs, behaviors, and reasoning. In other words, we don’t calmly and dispassionately deliberate about problems, mysteries, puzzles, or moral dilemmas. We have intuitions. And these intuitions then conjure reason to plead, to explain, to persuade. We think our beliefs and conclusions are reached through this process:

Reason ——> Conclusion/Belief ——> Passion/Emotion/Commitment

But they are actually reached through this process:

Intuition/Passion/Commitment ——> Conclusion/Belief ——> (Reason) ——> Stronger Passion/Emotion/Commitment

Reason is not the general of a well-disciplined army who decides where and when the army will attack; reason is the public relations officer who justifies the actions of the army after it attacks.

Consider another question—this time, a moral question:

Julie and Mark are brother and sister. They are traveling together in France on summer vacation from college. One night they are staying alone in a cabin near the beach. They decide that it would be interesting and fun if they tried making love. At the very least it would be a new experience for each of them. Julie was already taking birth control pills, but Mark uses a condom too, just to be safe. They both enjoy making love, but they decide not to do it again. They keep that night as a special secret, which makes them feel even closer to each other. What do you think about that, was it OK for them to make love?

If you are like most people, you do not think it was all right for them to make love. In fact, you think it was outrageous, disgusting, and very wrong. If you read this alone, then you might end your thought process there. It is disgusting and wrong. Nothing more needs to be said.

3. The public relations officer

But what if you read this in the context of a debate? What if the debate coach said, “This is what we will debate. You have to take the position that this is wrong.” What then? Well, then you would probably marshal the best possible argument you could. You wouldn’t be content to put forward facile arguments such as “it is gross.” Why? Because you can run the simulation in your mind. You assert that it is wrong because it is gross. Slyly smiling, your opponent counters, “people often have the same response to homosexual relationships. Do you think those are wrong?” And your argument would sink. But can you continue to defend the indefensible? And what is the point of reasoning in this case if you have already decided, via system 1, that incest is utterly wrong, and is utterly wrong no matter what precautions the incestuous participants took?

Haidt, Bjorklund, and Murphy (2000) posed the incest question above, among others, to college undergraduates. Afterward, they had each student engage in a discussion with an interviewer who was asked to play “the devil’s advocate.” Whatever answers the student gave, the interviewer was supposed to gently contradict them. The incest story elicited strong disapproval. Only 20% of the students originally said that it was all right for Julie and Mark to have sex. After their discussions with the devil’s advocate, several students changed their opinions and said that it was all right. Even so, the final group who said that the incestuous sexual encounter was all right was small. Some of the students were quite clever. They immediately noted that incest might cause genetic defects (see Haidt, 2012 for more details). “Yes, but the story indicated that they both used contraception.” At this point, some of the students noted that the sex might irreparably damage Julie and Mark’s relationship. “Yes, but the story indicated that it actually brought them closer together.” After putting forward and discarding a number of arguments, some of the students finally dropped all pretenses: it just seems wrong! Again, intuition appears to lead reason, which attempts, often defiantly, to defend whatever goal intuition desires.

So maybe some students were incapable of defending the indefensible and changed their opinions after conversing with an opponent, but most students remained adamant that incest is just wrong*. These students still couldn’t really defend their position. In the end, they simply asserted that it was wrong. But why did they defend it at all? Why did they not reason before arriving at a conclusion? And why not drop the moral outrage after deciding that, at the very minimum, it is very difficult to argue that consensual incest is a crime with an obvious victim? Well, according to Mercier and Sperber’s provocative suggestion (2011), reason did not evolve to guide us to the truth; rather, it evolved to equip us for persuading others. Reason is about arguing. We reason so that we can convince others that our beliefs are sound; and, just as importantly, we reason to protect ourselves against being manipulated by others. Reason is just one more tool in our armamentarium for changing the beliefs and behaviors of other people.

So, according to Mercier and Sperber, your original answer to the incest problem was probably not arrived at through reason. However, if you knew you would have to defend your belief about the problem, you would deploy reason to prepare a compelling case. And others would use reason to refute your case. This explains why we are quite good at discovering flaws in arguments with which we disagree, but relatively poor at discovering flaws in arguments with which we agree. When we are committed to some position or another, we are designed to protect ourselves against manipulation. We are designed to defend our commitment. This makes us skeptical of contrary opinions and warm to similar opinions. A good public relations officer doesn’t say to the press, “Yes. That is a good point, Bob. We were wrong. We hereby change our opinion.” Instead, she comes equipped with good rebuttals to possible arguments. When she runs out of good rebuttals, she, like the students in the Haidt et al. experiment, simply shrugs her shoulders and says, “We are just right because we are.”

4. Cognitive dissonance, scientific institutions, and progress

Okay. So far we have learned that Freud was reasonably right about at least one thing. Many of our cherished thoughts, beliefs, attitudes, and opinions are the output of the impenetrable machinery of our system 1 mind. We did not carefully reason about them. We had an intuition, a feeling, and that was good enough. If we did reason about them at all, we probably only did so to anticipate possible objections to them. Our rational mind is not an impartial arbiter; rather, it is an advocate, a dedicated votary of our intuitions. We don’t care about truth. We care about convincing and manipulating others.

But if we are all irrational and passionate creatures, clashing like ignorant armies in the night, then how can we possibly achieve knowledge? Well, Hegel argued that it was precisely this passionate clashing that allows progress. And, as I noted, I agree. This is because of two important things. First, we have a desire for cognitive consistency, especially when presenting ourselves to others. And second, we can create institutional structures that incentivize truth, that pay the passions for submitting to facts and data.

In his excellent book “The Expanding Circle,” Peter Singer argued that reason might allow for genuine moral progress (1981). It evolved because it helped us survive and reproduce (possibly by manipulating others). However, once it evolved, it developed a life of its own. When we use reason, we enter and ride an escalator. It may take us beyond survival and reproduction and into loftier regions of philosophical exploration and truth, allowing for both moral and scientific progress. Is this somewhat majestic vision of reason (at least compared, say, to the view of reason espoused by irrationalists) congruent with Mercier and Sperber’s arguments? I think so.

Reason creates a demand for consistency (which is effective for thwarting others’ attempts at manipulation). Social psychologists have long noted that holding two incongruent beliefs or behaviors causes mental tension, called cognitive dissonance. If you believe that rain makes people wet, it is difficult to believe also that it makes people dry. Suppose you saw a woman standing in a rainstorm without an umbrella. You were watching her from under a balcony. She walked under the balcony to ask you a question, and you noticed she was completely and utterly dry. Not a bead of water. You would probably be shocked and disconcerted. Did you really see that? Was it really raining? Your cognitive systems (both 1 and 2) desire some consistency. It is alarming when consistency is challenged.

Some have argued that this desire for consistency is more about self-presentation than about cognition (Baumeister, 1982). So, if we were left alone on an island, we would not experience intense cognitive dissonance. Nobody would call out our inconsistencies, and we would be blissfully unaware of the incongruities in our cognition. There are thirty gods. There is only one god. Doesn’t really matter. Nobody would object. We could believe both things at the same time. This is consistent with Mercier and Sperber. We are designed to spot inconsistencies in another person’s attitudes and behaviors to protect ourselves from possible manipulation.

Suppose, for example, that a cult leader rails against the sin of sexual relations while having myriad sexual affairs with his followers. It would be useful to note this hypocrisy and to resist the cult leader’s ideology—which is designed to thwart your reproductive fitness while enhancing his! A plausible corollary is that we should be sensitive to accusations of hypocrisy or inconsistency. If we want to convince others that our moral system is the right moral system, we, at the very least, should adhere to it. None of this matters if we are alone. The same holds for a favored hypothesis. To really convince others that a theory is correct, one must show that it is entirely consistent with the evidence. If it is not and opponents are allowed to assail it, then it will be replaced by a better theory. This is an important if.

If someone is not calling out an inconsistency or flaw in a belief, opinion, or theory, then most of us will just keep believing what our intuitions or previous commitments tell us. And it is truly astonishing what humans will believe when the jarring notes of dissonance are silenced by force or custom. Without contraries, as Blake noted, there is no progress. We become stultified and complacent. Opposition forces us to confront contradictions and to change our beliefs or theories if they cannot assimilate such contradictions.

This process works better at the institutional level. As I have noted, and as we all know, individuals don’t often change their minds. However, uncommitted observers do. The great physicist Max Planck is often paraphrased as saying that “science progresses one funeral at a time.” The old generation, devoted to its theory, refuses to listen to the arguments of the new generation. But they die. And the institution of science perseveres. And the best way to achieve status in science is not to adhere to dying dogmas; it is to put forward a new theory, one that better explains the available data. We have, then, created an institution that incentivizes the pursuit of truth. Scientists might just check their immediate intuitions because it actually pays to be right. Of course, plenty of scientists will continue to support flawed theories. But they will be replaced by other scientists who put forward new (and also probably flawed!) theories.

5. Conclusion: Seeking contradictions

Hearing an opinion that is different from one’s own is often irritating. Our immediate reaction is often to question the person’s sanity or motives. How could a sane and decent person possibly oppose higher taxes? How could a sensitive and tolerant person possibly oppose affirmative action? How could a morally scrupulous person oppose abortion? How could a reasonable person believe that men and women are biologically different? Because of this, we often surround ourselves with people who share our moral and ideological worldviews. We click our favorite websites, and ignore the “bad” websites where “bad” people espouse stupid beliefs that contradict our own. If the argument in this blog is correct, however, this common practice is almost guaranteed to stunt intellectual growth. Rather than avoid opposition, we should seek it out. If we are conservative, we should read Mother Jones, the Nation, and the New York Times; if we are liberal, we should read the National Review, the Wall Street Journal, and the Weekly Standard.

We will never be disembodied agents contemplating the truth without passion or prejudice. We are not manifestations of the world spirit. Progress is not a metaphysical inevitability. However, if we invite contradictions and opposition into our intellectual lives, if we demand that others assail our favorite theories and opinions, if we click on those “stupid” websites where people fatuously disagree with our preferred politics, then we might progress, both intellectually and morally.

* It is, of course, possible that the incestuous relationship is wrong. However, I haven’t yet heard a good reason. And I have asked many people.

References

Baumeister, R. F. (1982). A self-presentational view of social phenomena. Psychological Bulletin, 91, 3.

Haidt, J. (2001). The emotional dog and its rational tail: A social intuitionist approach to moral judgment. Psychological Review, 108, 814-834.

Haidt, J. (2012). The Righteous Mind: Why good people are divided by religion and politics. New York: Pantheon.

Haidt, J., Bjorklund, F., & Murphy, S. (2000). Moral dumbfounding: When intuition finds no reason. Unpublished manuscript, University of Virginia.

Kahneman, D. (2011). Thinking, fast and slow. New York: Macmillan.

Mercier, H., & Sperber, D. (2011). Why do humans reason? Arguments for an argumentative theory. Behavioral and Brain Sciences, 34, 57-74.

Singer, P. (1981). The expanding circle. Oxford: Clarendon Press.

Tversky, A., & Kahneman, D. (1983). Extensional versus intuitive reasoning: The conjunction fallacy in probability judgment. Psychological Review, 90, 293-315.